Anthropic Pushed Its Most Loyal Developers Straight Into OpenAI’s Arms.

OpenAI Didn’t Even Have to Ask.

A $200 per month subscription. Hundreds of thousands of enthusiastic developers. One documentation update. That’s all it took for Anthropic to hand its most engaged community directly to its biggest rival — and the most ironic part is that OpenAI collected the win without lifting a finger.

To understand how we got here, go back a few weeks.

In late January 2026, an open-source project created by Austrian developer Peter Steinberger exploded across the internet. The project was called Clawdbot, a play on Claude and the lobster claw that served as its mascot. Within a single day of its official January 25th launch, it had accumulated 9,000 GitHub stars. Within weeks, that number surpassed 190,000, a growth rate that rivals even Linux’s early trajectory.

The concept was straightforward but genuinely powerful. Instead of an AI that sits in a browser tab waiting for your questions, the project (later renamed OpenClaw) acts. It runs locally on your machine, connects to your email, your calendar, your Telegram, WhatsApp, and Discord, and executes tasks autonomously. You are not asking it to think. You are telling it to do.

Steinberger himself described it as “AI that actually does things,” and the reaction from developers made clear that this framing resonated in a way that no chatbot interface had before.

Anthropic’s First Move: The Trademark Notice

On January 27, 2026, Anthropic sent a trademark notice. “Clawd” was too similar to “Claude.” Steinberger agreed immediately and renamed the project Moltbot, keeping the lobster theme since lobsters shed their shells to grow. Three days later, after deciding Moltbot never quite rolled off the tongue, he settled on OpenClaw.

That was the name that stuck.

Up to this point, the situation was completely understandable. Protecting a registered trademark is a legitimate legal obligation, and Steinberger himself raised no objections. The community moved on.

What nobody anticipated was what came next.

OpenClaw users were running their personal agents through their Anthropic subscriptions. With the Max plan at $200 a month, you get a substantial token allowance, which is more than enough to operate a powerful personal assistant for routine tasks.

Technically, the setup relied on OAuth tokens, the same authentication system that Claude Code, Anthropic’s own official developer tool, uses to connect to Claude with a subscription account.

On January 9, 2026, Anthropic silently deployed a server-side block. OAuth tokens for Free, Pro, and Max consumer plans were now restricted to use within Claude.ai and the Claude Code CLI. Point them anywhere else, and users received an error:

https://x.com/trq212/status/2009689809875591565?s=20

Thousands of developers woke up that morning to completely broken workflows.

No announcement. No transition period. No warning.

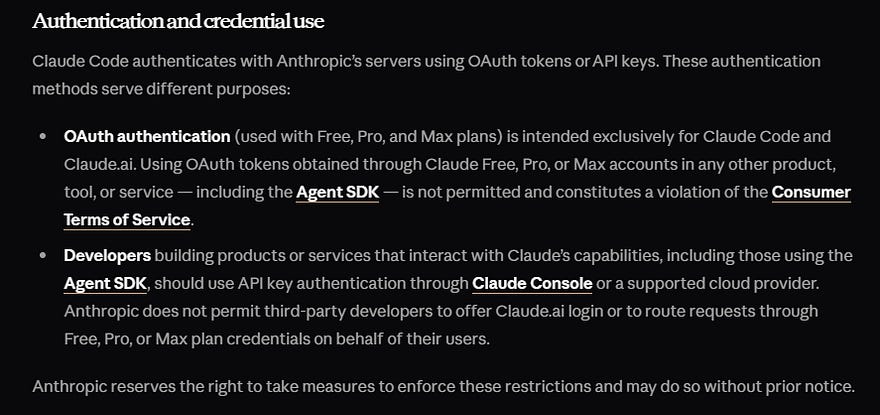

Then, in February, Anthropic updated its official documentation with language that left no room for interpretation:

That last detail, the explicit mention of the Agent SDK, was the line that ignited the community’s reaction. The Agent SDK is Anthropic’s own tool for building AI agents. The message was unambiguous: even Anthropic’s own SDK was not exempt.

No third-party tool, by any technical framing, was getting access to subscription tokens.

Why Anthropic Did It, and Why the Math Was Always Against Them

The economic logic is not hard to follow. A Claude Max subscription was never designed to handle an autonomous agent running LLM calls all day. In API terms, that kind of workload can easily cost thousands of dollars, not $200. A basic query on OpenClaw consumes approximately 50,000 tokens before the agent does anything meaningful.

Running that kind of workload through the Claude Opus 4.6 API quickly led to escalating costs, given its pricing of $15 per million input tokens and $75 per million output tokens.
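To make that arithmetic concrete, here is a rough back-of-the-envelope sketch in Python. It takes the figures quoted in this article at face value (the per-token prices, the roughly 50,000-token baseline, the $200 plan) and makes several simplifying assumptions: all baseline tokens are billed as input tokens, prompt caching and discounts are ignored, and the 100-queries-per-day workload is a hypothetical chosen purely for illustration. The function names are made up for this sketch.

```python
import math

# Figures as quoted in this article; treat them as illustrative, not authoritative.
INPUT_PRICE_PER_MTOK = 15.00         # USD per million input tokens
OUTPUT_PRICE_PER_MTOK = 75.00        # USD per million output tokens
BASELINE_TOKENS_PER_QUERY = 50_000   # approximate overhead of one basic query
SUBSCRIPTION_PRICE = 200.00          # USD per month for the Max plan


def query_cost(input_tokens: int, output_tokens: int = 0) -> float:
    """API cost in USD of a single query at the quoted per-token prices."""
    total = (input_tokens * INPUT_PRICE_PER_MTOK
             + output_tokens * OUTPUT_PRICE_PER_MTOK)
    return total / 1_000_000


def monthly_cost(queries_per_day: int, days: int = 30,
                 input_tokens: int = BASELINE_TOKENS_PER_QUERY,
                 output_tokens: int = 0) -> float:
    """API cost in USD of a steady daily workload over one month."""
    return queries_per_day * days * query_cost(input_tokens, output_tokens)


def break_even_queries(input_tokens: int = BASELINE_TOKENS_PER_QUERY,
                       output_tokens: int = 0) -> int:
    """Queries per month at which API-priced usage reaches the subscription price."""
    return math.ceil(SUBSCRIPTION_PRICE / query_cost(input_tokens, output_tokens))


if __name__ == "__main__":
    # Baseline overhead only, counted as input tokens (an assumption).
    print(f"Cost per basic query: ${query_cost(BASELINE_TOKENS_PER_QUERY):.2f}")
    print(f"Queries per month to reach $200: {break_even_queries()}")
    # Hypothetical always-on agent issuing 100 basic queries a day.
    print(f"Monthly API cost at 100 queries/day: ${monthly_cost(100):,.2f}")
```

Under these assumptions a single basic query costs about $0.75 in input tokens alone, so API-priced usage passes the $200 mark after roughly 267 queries in a month, and a hypothetical agent making 100 such queries a day would run to about $2,250 a month before any output tokens are counted.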

Subscription models operate on the same economic logic as a gym membership. They are profitable because most subscribers do not use them intensively. OpenClaw turned every Max subscriber who ran it into a heavy user, and the unit economics collapsed. From a purely financial standpoint, Anthropic’s decision to enforce its terms of service was rational. The rule prohibiting automated access via subscription credentials had been in the documentation for over two years; only the technical enforcement was new, arriving in January 2026.

Reasonable.

Defensible. And executed in a way that made Anthropic look like the villain of its own story.