Google Just Doubled AI Reasoning in 90 Days. The Number Speaks for Itself.

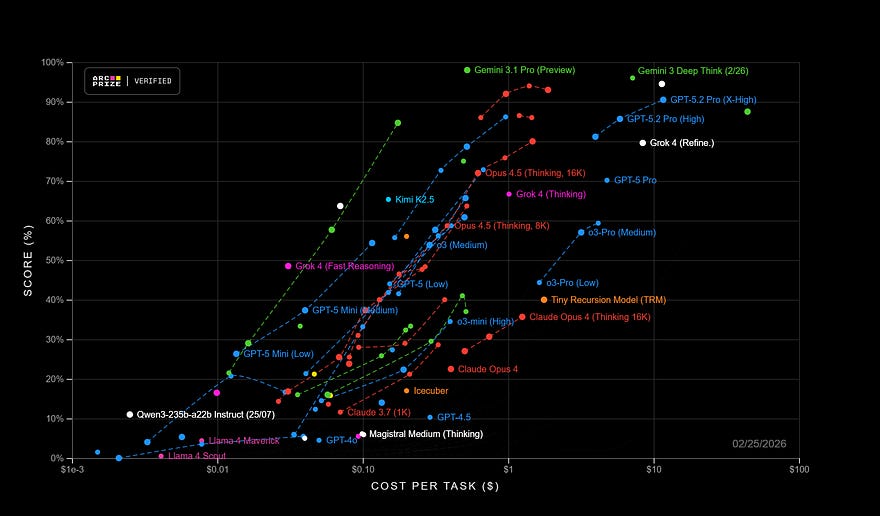

Gemini 3.1 Pro Scores 77.1% on ARC-AGI-2. Three Months Ago, Its Predecessor Scored 31.1%.

77.1%. That’s the score Google’s brand-new Gemini 3.1 Pro just achieved on ARC-AGI-2, a benchmark specifically designed to test whether an AI can solve logic problems it has never encountered during training. Not pattern recognition. Not retrieved memory. Genuine reasoning from scratch.

Three months ago, Gemini 3 Pro scored 31.1% on that same test. For context, that prior score was already considered a significant milestone when it launched in November 2025. Google just more than doubled it.

To understand why that number is extraordinary, it helps to know what this benchmark actually measures. ARC-AGI-2 was designed by François Chollet, the engineer who founded the ARC Prize, specifically to resist the trick most AI models rely on: memorization at scale. Humans, who bring intuitive fluid reasoning to completely unfamiliar problems, score almost perfectly on these tasks on average. Most frontier AI models have historically struggled to break single digits on early versions of this benchmark. Gemini 3 Pro’s 31.1% last November was already described by Chollet himself as more than double the previous state of the art. And Gemini 3.1 Pro just made that landmark look modest.

For the record: Claude Opus 4.6 scores 68.8% on the same benchmark. GPT-5.2 sits at 52.9%.

What’s Inside the “.1”

Google had never released a “.1” model update before. Their previous cycles moved in increments of 0.5 or full version numbers, with significant gaps between launches. Releasing an intermediate version this quickly after Gemini 3 Pro is itself a signal about how compressed the competitive pressure in AI has become right now.

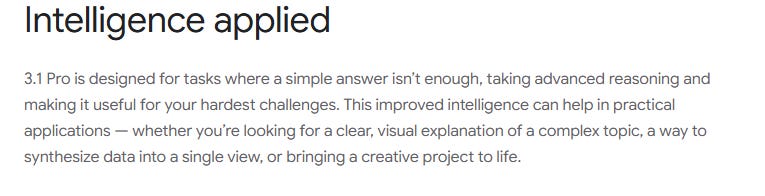

The update is not a broad feature release. It’s a focused intelligence upgrade, and Google was explicit in its announcement about what drove it.

In plain language, the reasoning improvements that had been powering Gemini 3 Deep Think, the research-grade version that made headlines for disproving a decade-old mathematical conjecture, have now been distilled into the model that everyone actually uses. The research-tier gains are now the consumer-tier baseline.

After early testing, JetBrains reported an improvement of more than 50% in benchmark task completion compared to the previous model. That’s not an incremental refinement. That’s a different capability floor.

The Benchmark Story, Without the Hype

The ARC-AGI-2 score is the headline, but it’s worth walking through the full picture, because a few of these numbers are genuinely striking.

On GPQA Diamond, which tests doctoral-level science reasoning across physics, biology, and chemistry, Gemini 3.1 Pro scored 94.3%, the highest ever recorded on that evaluation. Then, on SWE-Bench Verified, the standard benchmark for AI-assisted software engineering, it posted 80.6%. On LiveCodeBench Pro, a competitive coding evaluation, it reached an Elo rating of 2887, compared to GPT-5.2’s 2393 on the same leaderboard.

With a context window of 1 million input tokens, it’s possible to load entire codebases, comprehensive legal filings, or substantial research archives in one go. The output limit has been raised to 65,000 tokens, which matters for anyone generating long-form documents or complex multi-file code outputs.

There are a few places where competitors hold ground, and they’re worth stating plainly. In real-world software engineering evaluated by human experts, Claude Opus 4.6 still leads narrowly on SWE-Bench Verified. On tool-augmented reasoning tasks where models can call external functions, Opus 4.6 also maintains an advantage, which suggests stronger reliability when integrated into agentic workflows. And on the GDPval-AA benchmark, which measures economically valuable tasks like financial modeling, strategic planning, and research synthesis, Claude Sonnet 4.6 holds a significant lead. Google’s model is not uniformly dominant. It leads on abstract reasoning, scientific knowledge, and code generation speed. Anthropic’s models hold their position on expert-quality writing, planning, and judgment-heavy tasks.

That distinction matters if you’re choosing a model for genuine work.

The Feature That Actually Made People Stop Scrolling

Google made an unusual choice in its launch communications. For a model release built around benchmark performance and developer tooling, the feature it centered in its announcement wasn’t a reasoning score. It was animated SVG generation.

An animated SVG, for anyone unfamiliar, is a vector image that moves, built entirely from code rather than video. The practical advantages are significant: the file size is a fraction of equivalent video, it scales perfectly at any resolution, and it can be embedded natively in any web page. The limitation that has historically made animated SVGs impractical at scale is that writing them by hand is genuinely tedious, even for experienced frontend developers.
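To make “built entirely from code” concrete, here is a minimal hand-written sketch in Python that emits a tiny animated SVG: a circle sliding from left to right using the standard SVG `<animate>` element. The function name, dimensions, and color are illustrative assumptions, and this is not how Gemini produces its output. It only shows what the file format looks like.

```python
import xml.etree.ElementTree as ET


def make_animated_svg(width: int = 200, height: int = 100,
                      duration_s: float = 2.0) -> str:
    """Return SVG markup for a circle that slides left to right forever.

    Illustrative only: the name, sizes, and color are assumptions.
    """
    radius = 10
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" '
        f'viewBox="0 0 {width} {height}" width="{width}" height="{height}">'
        f'<circle cx="{radius}" cy="{height // 2}" r="{radius}" fill="steelblue">'
        # The <animate> child moves the circle's center along the x axis.
        f'<animate attributeName="cx" from="{radius}" to="{width - radius}" '
        f'dur="{duration_s}s" repeatCount="indefinite"/>'
        f'</circle></svg>'
    )


if __name__ == "__main__":
    svg = make_animated_svg()
    ET.fromstring(svg)  # raises ParseError if the markup is not well-formed XML
    print(svg)
```

Saved with an `.svg` extension, the printed markup should play in a modern browser. Even this trivial motion takes several attributes to describe, which hints at why hand-writing complex scenes gets tedious quickly.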

Gemini 3.1 Pro generates them from plain text descriptions. Google DeepMind CEO Demis Hassabis demonstrated the feature publicly on X with a pelican cycling, a frog on a lilypad, and a giraffe driving a small car. The animation quality in the demos was detailed enough to draw genuine reactions from the design and development community. The developer who built the feature posted publicly that he was exceptionally proud of it, which in the typically understated world of AI model releases is about as close to a victory lap as you get.

For web designers and content creators, this capability is not a novelty. Lightweight, scalable, text-prompted animation has been a real bottleneck in production workflows for years. That bottleneck just disappeared.

The Pricing Argument We Can’t Ignore

Here’s where Google’s strategy becomes genuinely aggressive.

Gemini 3.1 Pro costs exactly what Gemini 3 Pro costs: $2 per million input tokens, $12 per million output tokens. No price increase for a model that more than doubled its reasoning performance on the most rigorous available benchmark.

Claude Opus 4.6 costs $15 per million input tokens and $75 per million output tokens. GPT-5.2 comes in slightly cheaper on input at $1.75 per million but more expensive on output at $14 per million.
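To see how these list prices translate into a bill, here is a rough Python sketch. The per-token prices are the ones quoted above; the monthly workload (500 million input tokens, 50 million output tokens) is a hypothetical assumption chosen only for illustration, and the sketch ignores caching, batching discounts, and any tiered pricing.

```python
# USD per million tokens (input, output), as quoted in this article.
PRICES = {
    "Gemini 3.1 Pro": (2.00, 12.00),
    "Claude Opus 4.6": (15.00, 75.00),
    "GPT-5.2": (1.75, 14.00),
}


def workload_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the list-price cost in USD for one workload on one model."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


if __name__ == "__main__":
    # Hypothetical monthly traffic; replace with your own numbers.
    monthly_input = 500_000_000
    monthly_output = 50_000_000
    for name in PRICES:
        cost = workload_cost(name, monthly_input, monthly_output)
        print(f"{name}: ${cost:,.2f}")
```

The ranking depends heavily on the ratio of input to output tokens, so it is worth plugging in your own traffic mix before drawing conclusions.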

Artificial Analysis, the independent AI evaluation organization, now ranks Gemini 3.1 Pro first on their Intelligence Index while pricing it at roughly half the cost of its nearest frontier competitors. That kind of price-to-performance gap does not sit still for long. It forces a response from the other labs, and it accelerates the timeline for enterprise adoption in domains where the economics previously made frontier AI feel marginal.

When running frontier AI at scale costs half as much as a competitor’s equivalent model, the math on deployment changes.

Where to Find It Right Now

Google distributed this one broadly. Gemini 3.1 Pro launched in preview on February 19, 2026, across nine platforms simultaneously: the Gemini app (free tier with standard limits, higher limits for Pro and Ultra subscribers), NotebookLM for Pro and Ultra subscribers, Google AI Studio for developers, Gemini CLI for terminal workflows, Android Studio, Google Antigravity, Vertex AI for enterprise deployment, GitHub Copilot, and Gemini Enterprise.

The GitHub Copilot integration is drawing particular attention from early adopters. Reports from developers indicate that the model handles code edit loops with notably higher precision than previous versions, resolves problems with fewer tool calls, and reduces the back-and-forth that makes agentic coding slow and expensive. Fewer tool calls mean faster results and lower API costs. For teams running AI-assisted development at scale, that is a meaningful operational improvement.

The model is still in preview and not yet generally available. Some users reported response times exceeding 90 seconds during the initial launch window, including for simple queries. These are standard capacity issues for a high-traffic launch day and not indicative of the model’s performance under normal conditions, but worth knowing if you’re evaluating it this week.

What This Pace Actually Means for Us

Three months. That is how long it took Google to move from 31.1% to 77.1% on the benchmark specifically designed to resist rapid AI progress through memorization alone.

That pace is not normal. For comparison, the capability jump from GPT-4 to GPT-4.5 over a comparable timeframe produced roughly 15 to 20% relative improvement on similar reasoning evaluations. Google just delivered something qualitatively different in the same window of time.
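For readers who want to check the arithmetic, here is a small Python sketch that expresses the ARC-AGI-2 jump as a relative improvement, the same unit used in the comparison above. The two scores come from this article; the function name is just an illustrative choice.

```python
def relative_improvement(old_score: float, new_score: float) -> float:
    """Return the percentage gain of new_score over old_score."""
    return (new_score - old_score) / old_score * 100.0


if __name__ == "__main__":
    old, new = 31.1, 77.1  # ARC-AGI-2 scores quoted in this article
    print(f"Ratio: {new / old:.2f}x")
    print(f"Relative improvement: {relative_improvement(old, new):.1f}%")
```

Note that relative improvement is sensitive to the starting point: the same 46-point absolute gain would look far smaller as a percentage if the baseline had been higher.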

We are not in a period of incremental AI evolution. We are in a period where every major lab is pushing the others to move faster, drop prices, and reach higher capability thresholds before the next quarterly cycle ends. The AI you’re using today is not the AI you’ll be using in three months. The AI you’re using in three months will not resemble what arrives before the end of 2026.

If that feels vertiginous, it should. And the right response to vertigo is not to stop looking. It’s to figure out where the ground actually is.

Thanks for reading. Your thoughts are welcome in the comments.

Sources: Google DeepMind, DataCamp, ARC Prize, Geeky Gadgets, adwaitx, AI Unfiltered, MarkTechPost.