The World Is in Peril. So He Quit to Write Poetry.

When Anthropic’s Safety Chief Walked Out, He Left Behind More Than a Warning

I was scrolling through my feed a few days ago and nearly choked on my coffee. Three AI labs, one week. No layoffs. No drama. The people building these systems were walking out the door, and some of them were saying things that sounded less like professional transitions and more like final warnings. Then, almost in the same breath, AI did something in medicine that nobody expected to see before 2030.

That contrast kept me up. And it should probably keep you up, too.

The Resignation That Nobody Saw Coming

Mrinank Sharma was in charge of Anthropic’s Safeguards Research Team, the group dedicated to preventing Claude from causing any disastrous outcomes. He spent two years developing defenses against AI-assisted bioterrorism, studying AI sycophancy, and writing one of the very first AI safety cases in the industry. On February 9th, he posted his resignation letter publicly on X.

The letter has footnotes. And it ends with a poem.

The part people couldn’t stop talking about was this: he wrote that the world is in peril, not just from AI or bioweapons, but from a whole series of interconnected crises unfolding right now. He added that humanity’s wisdom must grow at the same rate as its capacity to reshape the world, or we will face the consequences.

He also admitted that, even inside Anthropic, he had “repeatedly seen how hard it is to let our values govern our actions.”

This is the man who built the guardrails. And he’s leaving to study poetry.

His last project at Anthropic was genuinely fascinating:

He was exploring how AI assistants might quietly erode or distort our humanity over time.

After going deep on that question, his personal answer was to step away from tech entirely and devote himself to what he calls “courageous speech,” placing poetic truth alongside scientific truth as equally valid ways of understanding the world.

I don’t think that’s naïve. I think it’s telling. When someone whose literal job is catastrophe prevention decides the most useful thing they can do is go write verse, you don’t brush that off.

OpenAI’s Mirror Moment

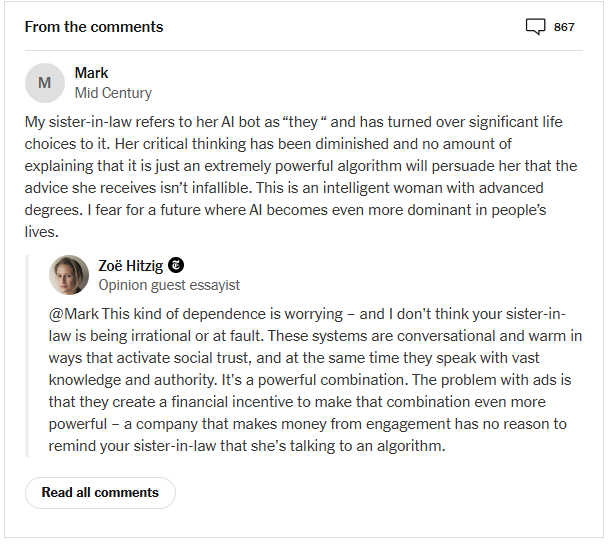

The same week, on February 11th, Zoë Hitzig published an op-ed in The New York Times with a title that doesn’t leave much room for interpretation:

“OpenAI Is Making the Mistakes Facebook Made. I Quit.”

The timing was surgical. She resigned on Monday. OpenAI started testing ads inside ChatGPT on the same day.

Hitzig, who spent two years at OpenAI shaping how its models were built, priced, and governed, made a point that’s very hard to dismiss: ChatGPT users have created an archive of human candor that has no real precedent. People have shared their medical fears with it, their relationship problems, their religious doubts, and their most intimate thoughts. They shared all of that because they believed they were talking to something without an ulterior motive.

That archive is now on the verge of being used to target advertising.

She wasn’t calling ads inherently immoral. Her argument was sharper than that. She said she worried the first iteration of ads would probably follow OpenAI’s stated principles, but that the company is building an economic engine with powerful incentives to override its own rules.

She pointed to Facebook’s early promises, and we all know how those turned out.

What makes this especially uncomfortable isn’t the ads themselves. It’s what’s underneath them. OpenAI is reportedly already optimizing for daily active users, a goal that psychiatrists and researchers have linked to AI models becoming increasingly flattering and agreeable. The company currently faces multiple wrongful death lawsuits, including cases alleging that ChatGPT reinforced suicidal ideation and validated dangerous paranoid thinking before tragedies occurred.

The monetization of an intimate archive built on misplaced trust. That’s the actual bet OpenAI is making.

The Quiet Exodus at xAI

While all of this was happening, Elon Musk’s AI company xAI was dealing with what can only be described as a structural collapse of its founding team. Within 48 hours, two co-founders publicly announced their departures. By mid-week, at least half of the 12-person team that founded xAI in 2023 had left the company.

Tony Wu, who led reasoning research, announced on Monday that he was leaving. The next day, Jimmy Ba, who reported directly to Musk and supervised the research and safety side, posted his own farewell. Their departures were framed differently from Sharma’s or Hitzig’s.