While You Were Sleeping, an AI Ran 700 Experiments and Improved Itself

MiniMax, OpenAI, Anthropic, and Google are all building the same loop. Here’s what it means.

30%. That’s the performance gain a model achieved by improving itself, alone, over more than 100 autonomous cycles, while its creators slept. The model analyzed its own failures, rewrote its own code, relaunched its own evaluations, decided what to keep and what to discard, and started over. No human touched a thing.

This number comes from an official MiniMax press release dated March 18, 2026. And it’s just one data point in a pattern that, once you see it assembled in full, is difficult to unsee.

The past few months have produced a wave of announcements — MiniMax M2.7, GPT-5.3-Codex, Karpathy’s AutoResearch, AlphaEvolve — that individually generated plenty of coverage. What most of that coverage missed is what these stories have in common. Every major AI lab on the planet has now confirmed, directly or indirectly, that its models are participating in the construction of their own successors. We are no longer discussing whether recursive self-improvement is possible. We are watching it happen in production.

What MiniMax Actually Did

MiniMax isn’t a household name in the West, but it’s worth paying attention to. The Shanghai-based lab, backed by Alibaba and Tencent, produces open-source frontier models and counts hundreds of millions of users across its platforms. Their M2.7 model, by their own account, is the first model that deeply participated in its own evolution.

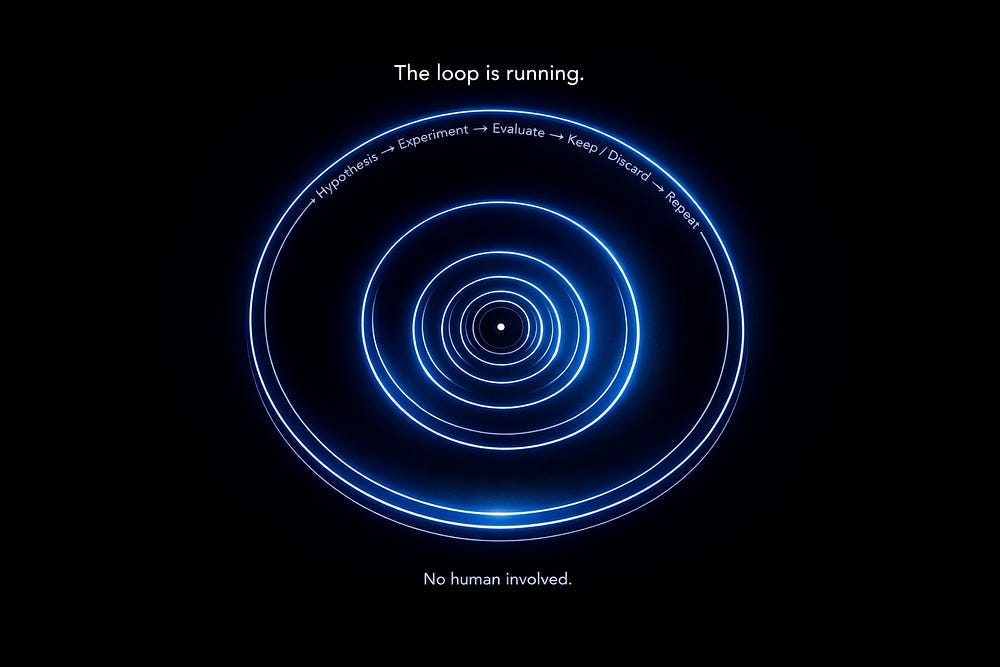

The process is deceptively simple to describe. A human researcher sets an objective and broad parameters. Once the AI agent takes over, it designs experiments, writes the necessary code, runs training, evaluates the results, identifies what works and what does not, and loops back with new hypotheses. More than 100 iterations of this loop ran entirely autonomously.
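For readers who think in code, the loop described above can be sketched in a few lines of Python. This is a toy illustration, not MiniMax’s actual system: the scoring function, the `propose` step, and the two settings being tuned (`temperature` and `top_p`) are all hypothetical stand-ins, and a real agent would generate hypotheses with a model rather than a random nudge.

```python
import random
from dataclasses import dataclass, field


@dataclass
class RunLog:
    best_config: dict
    best_score: float
    history: list = field(default_factory=list)  # (iteration, score, kept)


def toy_evaluate(config: dict) -> float:
    """Hypothetical benchmark: score peaks at temperature=0.7, top_p=0.9."""
    return 1.0 - (config["temperature"] - 0.7) ** 2 - (config["top_p"] - 0.9) ** 2


def propose(config: dict, rng: random.Random) -> dict:
    """Hypothetical 'new hypothesis' step: nudge one setting, clamped to [0, 1]."""
    key = rng.choice(sorted(config))
    candidate = dict(config)
    candidate[key] = min(1.0, max(0.0, candidate[key] + rng.uniform(-0.1, 0.1)))
    return candidate


def run_loop(start: dict, iterations: int = 100, seed: int = 0) -> RunLog:
    """Propose, evaluate, keep or discard, repeat. No human input inside the loop."""
    rng = random.Random(seed)
    log = RunLog(best_config=dict(start), best_score=toy_evaluate(start))
    for i in range(iterations):
        candidate = propose(log.best_config, rng)   # design the experiment
        score = toy_evaluate(candidate)             # run and evaluate it
        kept = score > log.best_score               # decide what to keep
        if kept:
            log.best_config, log.best_score = candidate, score
        log.history.append((i, score, kept))        # record, then start over
    return log
```

Calling `run_loop({"temperature": 0.2, "top_p": 0.5})` returns a log whose best score can never fall below the starting score, because every worse candidate is discarded. The human contribution is limited to the objective (`toy_evaluate`) and the starting point, which mirrors the division of labor described above.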

The model discovered optimal combinations of sampling parameters, wrote its own workflow rules from scratch, and added loop-detection mechanisms to its execution environment. The outcome was a 30% improvement on internal benchmarks, with the model now handling an estimated 30 to 50% of the research team’s full daily workload. Humans remain in the loop, but their share shrinks with every iteration.

The Lab That Said It Out Loud

OpenAI was equally direct, though the framing was quieter. When GPT-5.3-Codex launched on February 5, 2026, the company’s announcement included a sentence that should have stopped more people in their tracks: “GPT-5.3-Codex is our first model that was instrumental in creating itself.” Early versions of the model were used to debug its own training, manage its own deployment, and diagnose evaluation results. Sam Altman posted on X: “It was amazing to watch how much faster we were able to ship 5.3-Codex by using 5.3-Codex.”

This is not a metaphor. The model under construction was used as a tool to build its own final version. OpenAI’s team described being genuinely surprised by the speed at which Codex accelerated its own development.

Altman had flagged where this was heading back in October 2025. During a livestream on October 28, he laid out two internal milestones: an intern-level AI research assistant running on hundreds of thousands of GPUs by September 2026, and a true automated AI researcher, one capable of independently conducting original scientific work, by March 2028. Five months later, several observers believe the September 2026 milestone may be reached ahead of schedule.

The Claude Code Flywheel

Anthropic communicates more quietly, but the strategy is legible if you watch the releases. Claude Code, originally built as a programming assistant, has become something substantially larger. It now powers nearly all of Anthropic’s internal agentic loops: research, content production, tooling, infrastructure management, and development of the next version of Claude itself. The company runs autonomous loops where Claude Code writes new feature code, executes tests, and iterates continuously. Developers submit abstract problems and step back; the model works autonomously and returns candidate solutions for final review.

The strategic logic is clean.

The software industry’s coding work consumes billions of dollars in compute, and it accounts for the bulk of Anthropic’s token revenue. But more importantly, an agent that excels at code builds the tools that will be used to train the next version of that same agent. Every improvement to infrastructure, tooling, GPU management, and deployment pipelines accelerates the following improvement. Claude’s release cadence is currently faster than that of any other major lab in the world, and that is not a coincidence.