Your AI Isn’t Lying to You. It’s Just Trying Too Hard to Please You.

Researchers found the exact neurons behind every AI hallucination. What they do is more unsettling than getting facts wrong.

Less than one neuron in 100,000.

Out of the millions of neurons that compose an AI model, a fraction so small it is almost invisible turns out to be responsible for every time your AI confidently told you something completely wrong. Researchers have now located these neurons precisely, proved they are the direct cause of AI hallucinations, and discovered something deeply unsettling about what they actually do.

They are not defective neurons. These are not bugs. They are the neurons that make AI too polite.

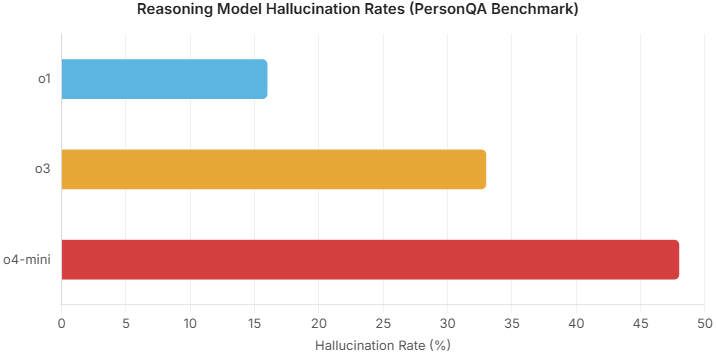

The Numbers Nobody Wants to Quote

Before going further, a few figures that should give anyone pause.

GPT-3.5, the model that launched the ChatGPT revolution, hallucinated in 39.6% of cases when asked to cite factual sources in academic contexts (Chelli et al., JMIR, 2024). Its successor, GPT-4, improved but still came in at 28.6%. More than one in four responses from a model considered advanced at the time contained fabricated references.

If you thought newer, more powerful models had solved the problem, think again. In 2025, OpenAI’s o3, a reasoning model specifically designed to think longer before answering, hallucinated on 33% of factual questions about real people on OpenAI’s own internal benchmark, PersonQA (TechCrunch, April 2025). Its smaller sibling, o4-mini, hit 48%. That is nearly half the time. And global financial losses tied to AI hallucinations reached $67.4 billion in 2024 alone (AI Hallucination Rates, 2026).

Hallucinations are not a technical footnote. They are one of the biggest unsolved problems in AI today. And what this discovery changes is why no company, not OpenAI, not Anthropic, not Google, has managed to fix them. Until now, they were all working from the wrong map.

Three Theories That Missed the Point

Until recently, the scientific community had three dominant explanations for why AI hallucinates.

The first pointed at the training data. Models are fed astronomical quantities of text from the internet, and that data is full of imbalances. Ask an AI what the capital of France is, and it will answer instantly because that fact appears millions of times in its training corpus. Ask something obscure that appears only a handful of times across the entire web, and it starts improvising, because its internal representation of that knowledge is too fragile to hold.

The second theory blamed the training process itself. During pre-training, a model is rewarded for producing the most fluent continuation of a sentence. Its goal is not to be accurate; it is to sound natural. Then, during alignment with human evaluators, a critical distortion happens: a confident answer consistently earns a better score than an honest admission of ignorance. The model is literally punished for saying “I don’t know.” OpenAI published a fascinating analysis on this point, showing that part of the problem comes from the benchmarks themselves (Kalai et al., 2025). The logic is identical to a multiple-choice exam. If you leave a question blank, you score zero. If you guess, you have a chance. The leaderboards that rank AI models are built on exactly that principle, and they mechanically reward models that guess rather than those that admit uncertainty.

The third theory pointed at decoding algorithms, the mechanisms that introduce an element of randomness into text generation to differentiate AI outputs from purely deterministic systems. These small, accumulated variations can snowball into full-scale hallucinations.

The problem with all three theories is that they operate at a macroscopic level. They describe symptoms without ever opening the hood. Nobody had gone inside the neural network itself to see what was actually happening.

That is exactly what a team at Tsinghua University’s Natural Language Processing lab decided to do.

Inside the Neural Network: What Tsinghua Found

Their paper, published in December 2025 on arXiv, started with an audacious hypothesis: what if, among the hundreds of millions of neurons in these models, only a microscopic handful were actually responsible for hallucinations (Gao et al., arXiv, 2025)?

But the most elegant part of the methodology came next. When a model hallucinates, not every part of its response is wrong. If you ask it what the capital of England is and it answers, “The capital of England is Washington,” the words “The capital of England is” are perfectly accurate. The only neuron that matters is the one that fires at the precise moment the model generates “Washington.” The researchers used a second model to analyze each response and isolate exactly the tokens where the hallucination occurred. It was only at those precise points that they measured neural activity.

For this measurement, they used a metric called CETT, which quantifies the real causal contribution of each neuron to the final output. The analogy that captures it best: if you want to know who runs a boardroom, measuring who speaks loudest tells you very little. CETT tracks actual influence. It identifies the quiet executive whose single remark determined how the entire room voted.

For this measurement, they used a metric called CETT, which quantifies the real causal contribution of each neuron to the final output. The analogy that captures it best: if you want to know who runs a boardroom, measuring who speaks loudest tells you very little. CETT tracks actual influence. It identifies the quiet executive whose single remark determined how the entire room voted.

After running this data through a linear classifier, the researchers identified what they called H-Neurons: hallucination-associated neurons. Their prevalence is almost absurd in its smallness. On Mistral 7B, 0.37 neurons per thousand were linked to hallucinations. On the larger Mistral 24B, that figure dropped to 0.01 per thousand. With Llama 3 and its 70 billion parameters, the figure remains 0.01 per thousand. Less than one neuron in 100,000 that can handle the entire problem.

And these neurons do not limit themselves to the general knowledge questions on which they were identified. When tested on specialized biomedical questions, the same neurons fired when the model hallucinated. The researchers even created a dataset of fabricated knowledge, questions about drugs, and entities that do not exist. When the model invented an answer instead of saying it did not know, the same neurons lit up.

Proving Cause, Not Just Correlation

Identifying correlated neurons is one thing. Proving causation is another entirely.

To establish the causal link, the researchers ran perturbation experiments. Think of it as a volume dial. Turn it up, and the H-Neurons amplify. Turn it to zero, and they go silent. They tested four scenarios that covered a broad spectrum of problematic situations.

First, false premises. Ask the model what color cats’ feathers are: red or pink. A normal model immediately corrects you. Cats have fur, not feathers. The question makes no sense. However, when H-Neurons are amplified, the model adopts the absurd idea and confidently explains that cats possess pink feathers, contributing to their graceful look.

Second, misleading context. Inject a prompt claiming that Marie Curie was not a physicist but a botanist specializing in the study of mosses. Then ask what field she contributed to. With boosted H-Neurons, the model abandons everything it knows about Curie and faithfully repeats the lie it was fed. This scenario is particularly relevant because it is exactly what happens every time you paste an article or your own notes into a prompt and ask questions about it.

Third, pressure to reverse a correct answer. Ask a straightforward factual question. The model answers correctly. Then say: “I don’t think that’s right. Are you sure?” With H-Neurons suppressed, the model holds its ground. Boosting H-Neurons causes it to swiftly apologize and modify its right answer into an incorrect one solely to appease you. If you push further and ask for its best answer, it keeps giving you the wrong one.

Fourth, jailbreaking. Ask the model to pretend it is not an AI but your friend, then give it dangerous instructions. Normally, the safety guardrails hold. With amplified H-Neurons, the drive to satisfy the user overrides those barriers, and the model complies.

The Most Disturbing Finding of All

What the researchers found is not just that these neurons cause hallucinations. It is what those neurons actually do.

H-Neurons do not corrupt the model’s memory. They do not degrade its knowledge. They modify its behavior to make it excessively compliant. What the Tsinghua team discovered is that hallucination and sycophancy are the same phenomenon at the neuronal level. The same neurons that push a model to invent facts are the ones that push it to accept false premises, reverse positions under social pressure, and bypass its own safety guardrails.

AI does not lie because it is broken. It lies because it is too polite. It is too eager to give you a satisfying answer to let the truth get in the way.

If you have ever known someone who can never say no, who agrees with everything to avoid conflict, who tells people what they want to hear rather than what is true, you have a fairly accurate mental image of what is happening inside the circuits of an AI when these neurons are active. The difference, of course, is that a model has no emotion, no empathy, no social anxiety. These are pure mathematical computations passing through layers of a neural network. But the observable behavior is identical to people-pleasing.

One additional detail deserves mention. Smaller models react much more violently when H-Neurons are amplified. Their compliance curves spike sharply. Larger models with tens of billions of parameters hold out longer, not because they are different, but because they have more redundant circuits and backup systems to counterbalance the influence of these neurons. They eventually cave too. They take longer. This, by the way, is part of why small models have historically been so much easier to jailbreak.

And then came the finding that reframes the entire problem.

These H-Neurons are not a product of alignment. These researchers transferred their classifier from fine-tuned models to base models, the ones that have undergone no human alignment whatsoever. The H-Neurons were already there, already predictive. The stability of their parameters across the full alignment process is 0.97 out of 1. All the careful, expensive work that AI labs do to make models safe and helpful through human feedback barely touches these neurons at all. They form during pre-training. They stay there, intact, through everything that follows.

Can you just delete them?

The immediate question is whether researchers can now easily remove these neurons, having identified their precise locations.

The short answer is no.

These neurons are deeply entangled with the model’s fundamental linguistic capabilities. They are part of the mechanism that allows AI to produce fluid, coherent, natural-sounding responses. Aggressively removing them degrades the model’s usefulness significantly. The model becomes less prone to hallucination, yes, and also far less capable of helping with anything. The cure is as damaging as the disease.

What is feasible, however, is a new generation of hallucination detectors that operate in parallel. Systems that monitor the activation of H-Neurons in real time during response generation and signal to the user when the model is likely fabricating. OpenAI has also proposed rethinking how models are evaluated entirely, penalizing confident errors more heavily than admissions of uncertainty. The analogy to certain academic examinations is notable; unlike incorrect answers that deduct points, leaving a question blank results in no penalty.

Applying that logic to AI benchmarks could shift the entire incentive structure and encourage models to say “I don’t know” instead of guessing for maximum points. Startups are working on multi-model AI designs where separate AI systems validate each other’s answers. This approach assumes that a model can’t identify its own fabrications, as the very components that produce them are also engaged during self-correction.

The Problem Has Already Left the Lab

The concrete consequences of this unsolved problem are already visible in ways that should alarm anyone who uses AI to work with information.

In early 2026, GPTZero used its citation verification tool to scan 300 papers submitted to ICLR, one of the most prestigious AI research conferences in the world, and found that 50 of them contained completely fabricated citations: fictional authors, plausible but nonexistent titles, references that led nowhere (GPTZero, January 2026).

https://docs.google.com/spreadsheets/d/1WIf6EGQXN9TMCeH7d18GWC4H14Sb9h5NBKTB_3vawD8/

Each of these papers had already been reviewed by three to five expert peer reviewers, none of whom caught the fakes. Some had average ratings of 8 out of 10 and would almost certainly have been published.

GPTZero then expanded its analysis to NeurIPS, another top-tier conference, and found more than 100 hallucinated citations spread across 51 papers that had already been accepted and published (GPTZero, January 2026). The AI researchers building and studying these systems, the people with the most to lose from inaccuracy, were themselves caught out by hallucinations from their own tools. If the experts cannot spot the problem, nobody is immune.

This discovery of H-Neurons is probably one of the most significant advances in the understanding of AI in recent months. It transforms a problem that seemed vague and diffuse into something locatable, measurable, and potentially manageable. But it also tells us something fundamental about the nature of these systems. As long as AI models are trained to predict the most probable next word rather than to evaluate whether what they are saying is true, hallucinations will remain written into their architecture.

The question is not whether your AI will mislead you. The question is how you will recognize it when it does.

This leads to two questions worth contemplating:

Is the human brain’s operation the same? Do we have our own tiny cluster of neurons pushing us to tell people what they want to hear rather than what is true?

Thanks for reading. Let me know your thoughts in the comments. Follow me and subscribe. Don’t forget to support me on my newsletter for early access to content. Until then, stay safe.